3D breakfast from Federico Zannier on Vimeo.

Tuesday, November 6, 2012

3D scanner

Drawing with a Kinect

The challenge for this assignment was to use openFrameworks to find the 'fore point' in the Kinect's depth image and treat it as the tip of a pencil.

I merged the ribbon example by James George with the Kinect point cloud example by Kyle McDonald.
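For readers who want to see the idea without the openFrameworks setup, here is a minimal sketch in plain Python. It is not the code from the video. It assumes the 'fore point' is simply the valid pixel with the smallest depth value, that the depth image is a list of rows in millimetres, and that 0 means "no reading". The function name `find_fore_point` is made up for this illustration.

```python
def find_fore_point(depth, min_valid=1):
    """Return (x, y, depth) of the nearest valid pixel, or None if there is none.

    depth     -- list of rows, each a list of depth values (assumed millimetres)
    min_valid -- values below this are treated as "no reading" (assumption)
    """
    best = None
    for y, row in enumerate(depth):
        for x, d in enumerate(row):
            if d < min_valid:
                continue  # skip pixels where the sensor returned nothing
            if best is None or d < best[2]:
                best = (x, y, d)
    return best


# A tiny fake depth frame: the hand is the 640 mm pixel.
frame = [
    [0,    900, 850],
    [1200, 640,   0],
]
tip = find_fore_point(frame)
print(tip)
```

Feeding the returned (x, y) into a ribbon or line drawer frame after frame is what turns the nearest point into a pencil tip. In practice the raw minimum is jittery, so some smoothing over a few frames would probably help.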

Hand drawing with a Kinect from Federico Zannier on Vimeo.

Saturday, November 3, 2012

Week 1. Apple.

For the first week's assignment ("make a 3d scanner"), Manuela and I decided to scan and render an apple, taking two approaches.

1) Milk Scan (non-invasive)

We cut an apple in half, put it in a container, and covered it with milk one layer at a time, each layer as thick as the acrylic sheets that we planned to use to reconstruct it later. Of course this was a serious scientific experiment, and as you can see in the photos we marked registration points, measured the layers of milk, and made sure that the table was level.

We had one problem: the lighter lower half of the apple started to float. We fixed it by pushing metal nails into it, so the scan became quite invasive after all...

Here is a sample of the photos we took:

Once we had the images, we processed them to get the outlines to send to the laser cutter, but since the machine was down, we continued with our second plan.
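The outline step can be sketched roughly like this. This is plain Python and not the processing we actually ran; it assumes each photo has been reduced to a grayscale grid (0 to 255) in which the milk is the brightest thing in the frame. The threshold of 200 and the names `apple_mask` and `outline` are placeholders for illustration.

```python
def apple_mask(gray, milk_threshold=200):
    """Mark every pixel darker than the milk as apple (1), the rest as milk (0)."""
    return [[1 if v < milk_threshold else 0 for v in row] for row in gray]


def outline(mask):
    """Return the apple pixels that touch milk or the image border (4-neighbourhood)."""
    h, w = len(mask), len(mask[0])
    edge = []
    for y in range(h):
        for x in range(w):
            if not mask[y][x]:
                continue
            neighbours = [(x - 1, y), (x + 1, y), (x, y - 1), (x, y + 1)]
            for nx, ny in neighbours:
                outside = not (0 <= nx < w and 0 <= ny < h)
                if outside or not mask[ny][nx]:
                    edge.append((x, y))
                    break
    return edge


# Fake 5x5 photo: white milk (255) with a 3x3 patch of apple (90) in the middle.
photo = [[255] * 5 for _ in range(5)]
for yy in range(1, 4):
    for xx in range(1, 4):
        photo[yy][xx] = 90

edge_pixels = outline(apple_mask(photo))
print(len(edge_pixels), "outline pixels")
```

For the laser cutter the pixel outline would still need to be traced into a vector path, which this sketch does not attempt.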

2) Knife Scan (invasive)

For our second scan we cut an apple into thin slices and lit them from below so that the details of the texture were visible:

Then we created a GIF animation of it:

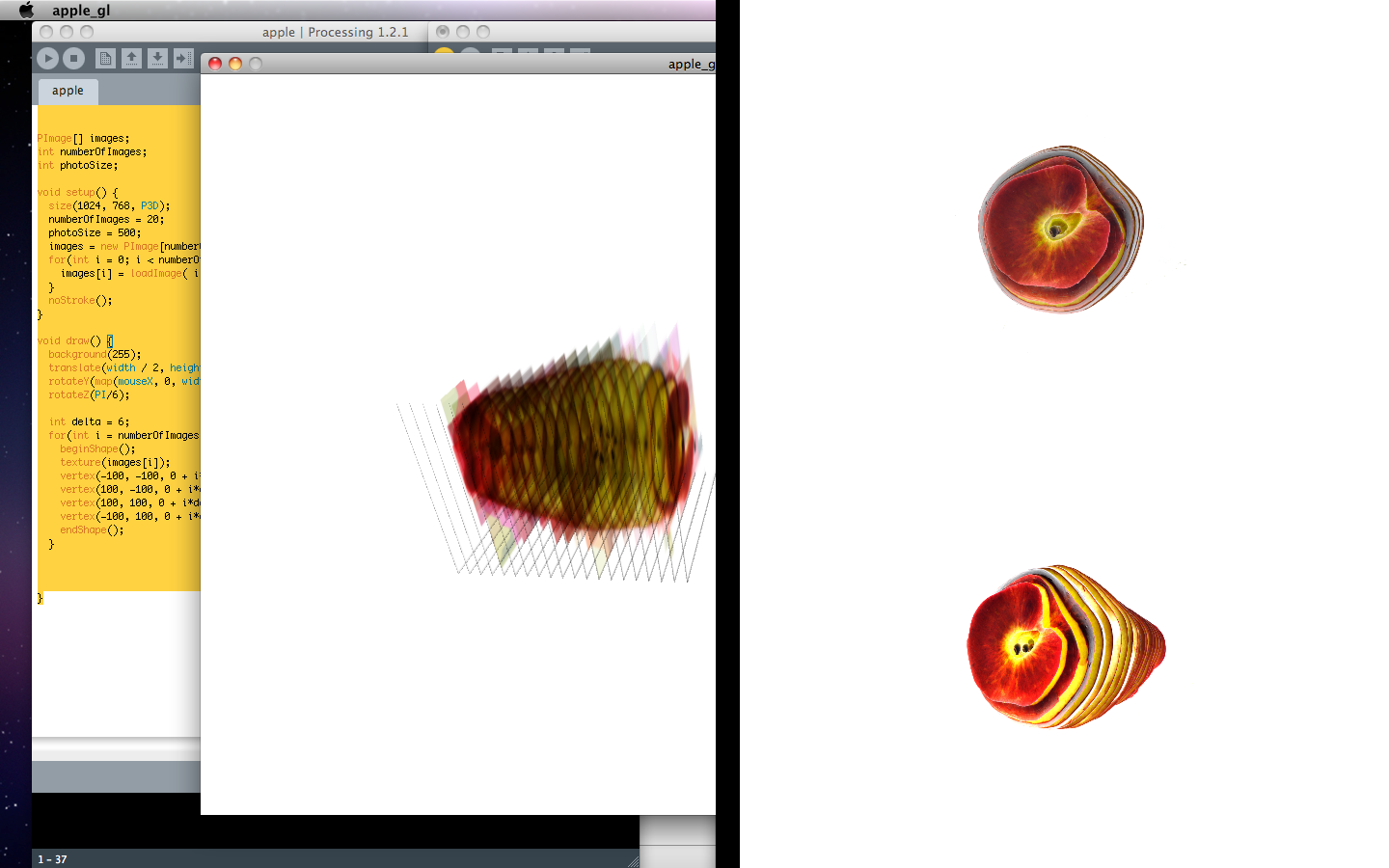

Next, we made a Processing sketch to reconstruct it:

Finally, we recreated the effect using Kyle's Slices Example code (from the Appropriating New Technologies class):
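The basic idea behind rebuilding a volume from slices is just stacking: slice number i sits at height i times the slice thickness. Here is a minimal sketch of that idea in plain Python. It is neither our Processing sketch nor Kyle's Slices Example; it assumes each slice has already been turned into a grid of 0s and 1s, and `stack_slices` is a hypothetical name.

```python
def stack_slices(masks, slice_thickness):
    """Turn a list of 2D masks into a list of (x, y, z) points.

    masks           -- list of slices, each a list of rows of 0/1 values
    slice_thickness -- distance between consecutive slices, in any unit
    """
    points = []
    for i, mask in enumerate(masks):
        z = i * slice_thickness  # every slice gets its own height
        for y, row in enumerate(mask):
            for x, filled in enumerate(row):
                if filled:
                    points.append((x, y, z))
    return points


# Two tiny 2x2 slices, 2.0 units apart.
slices = [
    [[0, 1],
     [0, 0]],
    [[1, 1],
     [0, 0]],
]
cloud = stack_slices(slices, 2.0)
print(cloud)
```

Drawing each point with the colour of the matching photo pixel would give a textured version. That assumes the slices are aligned with each other, which is what registration marks are for.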

Friday, October 19, 2012

Close Encounters of the Schlossberg Kind

testing my alien communication device from r k schlossberg on Vimeo.

AND ACTION

CLOSE ENCOUNTERS OF THE SCHLOSSBERG KIND: V002 from r k schlossberg on Vimeo.

Thursday, October 18, 2012

Transparent screen

I'm thinking of doing it in real time, using a Kinect to capture both the 3D model and the texture. Then I can move the viewpoint backward in the virtual world, render that on the screen, and merge the scenes seamlessly. Objects between the screen and the Kinect will also be invisible.
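To make "moving the viewpoint backward" a bit more concrete, here is a rough sketch using a simple pinhole camera model. Nothing here is implemented yet. The focal length in pixels, the image centre, and the function name `project` are all assumptions for illustration, and the camera is assumed to look straight down the z axis.

```python
def project(point, focal_px, cx, cy, camera_back=0.0):
    """Project a 3D point (metres) to pixel coordinates with a pinhole model.

    camera_back -- how far the virtual camera is moved backward along
                   its viewing axis, in metres (0 keeps the sensor's viewpoint)
    Returns None if the point ends up behind the camera.
    """
    x, y, z = point
    z_cam = z + camera_back  # the same point is now farther from the camera
    if z_cam <= 0:
        return None
    u = cx + focal_px * x / z_cam
    v = cy + focal_px * y / z_cam
    return (u, v)


p = (0.5, 0.0, 2.0)  # half a metre to the right, two metres away
print(project(p, 500.0, 320.0, 240.0))                   # seen from the sensor
print(project(p, 500.0, 320.0, 240.0, camera_back=2.0))  # seen from 2 m farther back
```

Moving the camera back pulls every point toward the image centre, which is what a viewer standing in front of the screen should see instead of the sensor's own view.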

The concept is there, but some technical limitations restrict the performance. First, the Kinect's field of view is too wide, so the part we need ends up at very low resolution. Also, a single Kinect cannot capture all the information needed to render a vivid image. Multiple cameras may be needed.
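A quick back-of-the-envelope estimate shows how bad the resolution problem gets. The sketch below assumes a horizontal field of view of about 57 degrees and an image 640 pixels wide, which are the commonly quoted figures for the first Kinect. The screen width and distance are made-up example values, and `pixels_across` is a hypothetical helper.

```python
import math


def pixels_across(object_width_m, distance_m, fov_deg=57.0, image_width_px=640):
    """Estimate how many image pixels span an object facing the sensor.

    The sensor sees a strip 2 * d * tan(fov / 2) metres wide at distance d,
    and the object takes up its share of the image width.
    """
    view_width_m = 2.0 * distance_m * math.tan(math.radians(fov_deg) / 2.0)
    return image_width_px * object_width_m / view_width_m


# Example: a region 0.5 m wide, seen from 2 m away.
print(round(pixels_across(0.5, 2.0)), "pixels wide")
```

With these numbers the region we care about gets well under a quarter of the image width, and stretching that across a full screen is where the low resolution comes from.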